PV2TEA: Patching Visual Modality to Textual-Established Information Extraction

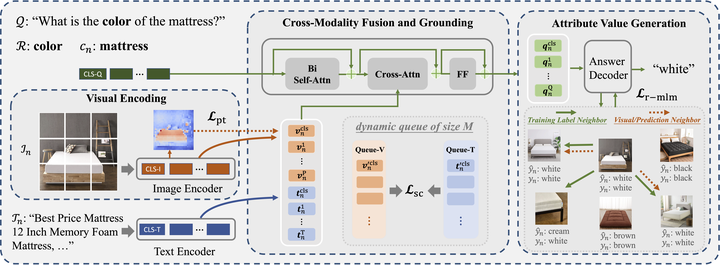

The overview of PV2TEA model architecture with three modules, where each of them is equipped with a bias reduction scheme.

The overview of PV2TEA model architecture with three modules, where each of them is equipped with a bias reduction scheme.Attribute value extraction, as a fundamental task in e-Commerce services, has been extensively studied and formulated as text-based extraction. However, many attributes can benefit from image-based extraction, like the product color, shape, pattern, among others. The visual modality has long been underutilized, mainly due to multimodal annotation difficulty. In this paper, we aim to patch the visual modality to the textual-established product attribute extractor. The cross-modality integration faces several unique challenges: (C1) product images and textual descriptions are loosely paired intra-sample and inter-samples; (C2) product images usually contain rich backgrounds that can mislead the prediction; (C3) weakly supervised labels from textual-established extractors are biased for multimodal training. We present PV2TEA, an encoder-decoder architecture equipped with three bias reduction schemes: (S1) Augmented label-smoothed contrast to improve the cross-modality alignment for loosely-paired image and text; (S2) Attention-pruning that adaptively distinguishes the product foreground; (S3) Two-level neighborhood regularization that mitigates the label textual bias via reliability estimation. Empirical results on real-world e-Commerce datasets demonstrate up to 11.74% absolute (20.97% relatively) F1 increase over unimodal baselines.